Why we invested in Callosum

by Ian Hogarth

The infrastructure buildout behind frontier AI is one of the most impressive engineering feats in the modern era, with the amount of compute needed to train the most powerful models increasing over 1 billion times between 2010 and 2024.

But if applied AI continues to scale across the economy, with more complex and varied architectures, I believe that the compute stack of 2035 will look fundamentally different to how it does today.

In 2026, AI is primarily built on one of three full-stack solutions - the NVIDIA stack, the Google stack or the Huawei stack. They dominate the market, concentrating control of AI compute into the hands of a small group. These stacks have been built on the assumption that a homogeneous system - scaling a single model on identical chips - will deliver ever-greater capability and intelligence.

But real-world problems are heterogeneous: solving complex tasks requires different capabilities, which means we will increasingly use multiple models optimised as a system rather than a single homogeneous model.

Founded by Danyal Akarca and Jascha Achterberg, London-based Callosum is building systems-level software for this new paradigm. It lets AI developers run multiple models on a wide range of chips, and allows them to extract performance benefits from new, AI-optimised hardware that performs better than existing tech for specific jobs.

By enabling a more heterogeneous compute stack, Callosum’s orchestration layer makes compute more efficient and more powerful, while unlocking whole new classes of AI by allowing different types of chip to be used together.

A rebel alliance

Developing a new heterogeneous compute paradigm is no mean feat, but there are significant market players who form a kind of rebel alliance that wants the stack to fragment.

Cloud providers running AI data centres - a market growing from $130bn today to $2tn by 2034 - have every reason to want a more heterogeneous compute market. Diversifying beyond any single supplier reduces their dependency risk, accelerates their capacity buildout and lowers costs while improving energy efficiency. Ultimately, it could unlock higher performance, lower cost and more capabilities for their end users.

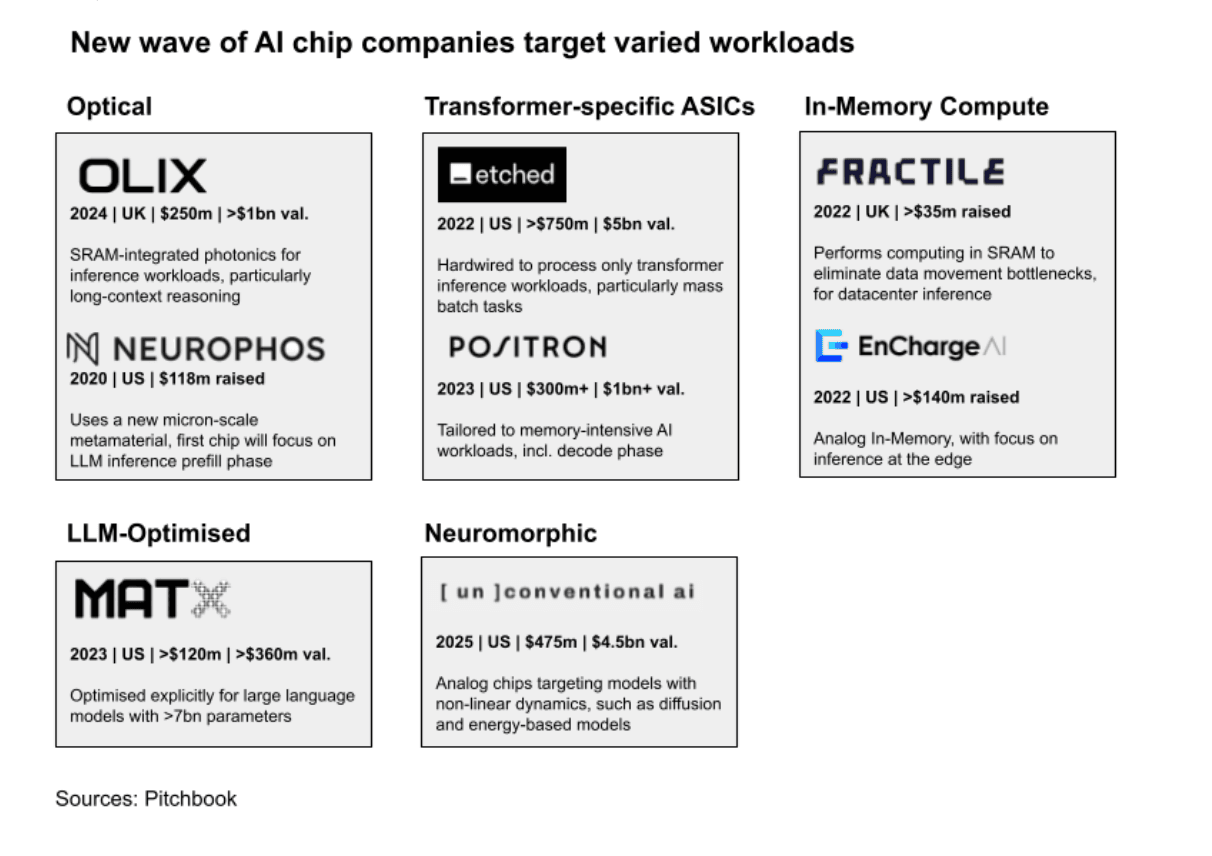

Alongside large chip makers like AMD and Intel who want to increase their market share, there are well-financed newcomers trying to gain a foothold in AI compute. US funding for semiconductor startups hit a record $6.2bn in 2025, up from $2.3bn in 2020, as investors bet that new architectures will deliver greater performance and efficiency than can be delivered with today’s stack.

Startups like Etched, Fractile, and MatX build hardware specifically designed for AI workloads but, while these chips tend to excel at some tasks, they aren’t necessarily able to do everything well.

Callosum can be the missing piece. Its system extracts performance benefits from different chip architectures and optimises each one for the task at hand, producing outcomes that are greater than the sum of the parts, letting AI builders do things they couldn’t before.

The team has already shown that this works for complex, real-world tasks. In the case of autonomous computer use, which requires vast amounts of information and many different types of decisions, Callosum’s system already delivers 2x accuracy, 7x speed and 4x lower cost compared to homogeneous compute.

The brain power to rethink compute

This vision for heterogeneous compute comes from cofounders with Cambridge PhDs in computational neuroscience, whose work on how brain intelligence intersects with computing and AI has been published in several Nature journals. Danyal and Jascha are applying that deep thinking to fundamentally question how compute works, based on the conviction that powerful AI will develop in much the same way that human intelligence did.

Our brains didn’t develop intelligence by repeating the same neuron billions of times, but by combining many different types of cells, signals and specialised circuits. Danyal and Jascha have been able to translate that principle into a working product by being equally obsessive about hardware and software, thinking about computation from both sides of the equation.

As NVIDIA and its CUDA product show, some of the best tech companies in the world combine software and hardware excellence. Doing either one is hard, but spanning both is particularly tough and requires an unusual set of capabilities.

Jascha began working with DeepMind as part of his PhD, before spending some time at Intel working on chips. Danyal, who leads a team at Imperial’s flagship AI initiative i-X, was funded by ARIA to research neuromorphic computing.

The two are very complementary characters for the hard task of building systems-level software.

Dan has a very infectious and enthusiastic quality to his energy, which persuades you that he can will something very different into existence. It’s the kind of energy that will rally a group of exceptionally ambitious people towards a new way of doing things and bring an ecosystem with them.

Meanwhile, one way to describe Jascha is tasteful. He has a really good sense of judgment and an almost artistic sensibility that he’s able to apply to very hard computer science. He knows what to build and what not to.

They are also both a part of Meta’s Open Compute Project, a nonprofit consortium of leading hardware vendors. This experience has helped them build a strong network in the high-performance compute space, and an understanding of what cloud providers and chip makers want.

The VMware of compute

In a new, more heterogeneous paradigm of compute, the chip market of the future will naturally have winners and losers on the hardware side.

What’s attractive about Callosum from a venture investor’s perspective is that it’s naturally less capital intensive than building a new chip. It sits above the hardware layer, across the ecosystem. And it can win by unlocking the collective potential of that ecosystem, regardless of which providers ultimately prevail.

One analogy here is VMware. In the 2000s, enterprises were dealing with fleets of underutilised physical servers. VMware solved this not by building a better server, but by creating a software layer that sat above the hardware and let multiple workloads run on any machine.

Callosum can do something equivalent for heterogeneous compute, and the space is ripe for building a very large company that can also enhance European semiconductor sovereignty.

With London-based photonics startups like OLIX and Oriole, the UK is turning into a hotspot for next-generation AI compute, and Europe has a genuine shot at playing a leading role in the future of this market. Callosum’s orchestration layer can play a key part in making that a reality.

Danyal and Jascha are making an ambitious, contrarian bet: that AI won’t run on a single dominant chip stack, but on a more diverse hardware landscape, where incumbents like NVIDIA work in concert with other paradigms, and smaller players can gain power faster.